Artificial intelligence, and in particular generative AI, provides significant opportunities for businesses. However, unlike other advancements in technology, it also raises ethical and cultural challenges, as well as new risks, governance and regulatory considerations.

Here at Fifty One Degrees, we believe that developing a well-considered, people-centric strategy for AI adoption will greatly enhance the success of your initiatives. As an AI Consultant, we will produce your strategy, roadmap and policy for you; an example of our work is below.

For this example AI strategy, roadmap and policy, we have created a fictional client called River Bank. River Bank is a mid-market UK bank with a culture of striving to deliver outstanding customer experience to its consumer, business and vehicle finance customers. It has 2,000 team members across several offices, interest-bearing assets of £7bn and revenue of approximately £1bn, and it makes a reasonable profit. It has grown organically but also by acquisition, and therefore has a complex and, in places, outdated technology stack, making product releases difficult.

River Bank intends to complete a comprehensive Generative AI integration within 12 months, including a full operational-level deployment (see below for more information on this). Your initial Generative AI strategy might be narrower, but we believe you must have a well-considered strategy, irrespective of how broad it is.

When creating a strategy and roadmap for generative AI, there are several strategic questions to consider. You don’t need to answer them all on day one, but it is worth giving them thought.

This is an understandable question that we are asked regularly. Generative AI can be deployed in countless ways, big and small, across every function and every team. So where do you start?

In the simplest terms, we recommend the following process:

However, we believe the adoption of AI will have similarities to the adoption of the internet over the past 25 years, and so the reality is you will cycle through these steps again and again. This mindset is important, as knowing you will repeat the steps makes it easier to get on and complete your first iteration.

There are now many fantastic examples of deploying generative AI to make businesses more efficient and effective. When choosing your first proof of concept (PoC), we believe it is worth looking at two well-publicised use cases.

These two approaches are very different. Klarna’s approach was to take one major function and use generative AI to automate two-thirds of it. Moderna’s, by contrast, was to democratise generative AI across its business and encourage team members to use the tools to improve their work and increase their productivity, resulting in 750 incremental improvements rather than one big one.

Both of these approaches have merits, but at Fifty One Degrees we’re advocates of starting with Moderna’s, where firms begin by using generative AI to solve many small challenges, as opposed to one major challenge. We recommend this approach for several reasons:

To maximise the benefit of generative AI technologies, you will need to empower your team to use them to their fullest. In turn, this increases the investment required by firms to enable enterprise features, such as:

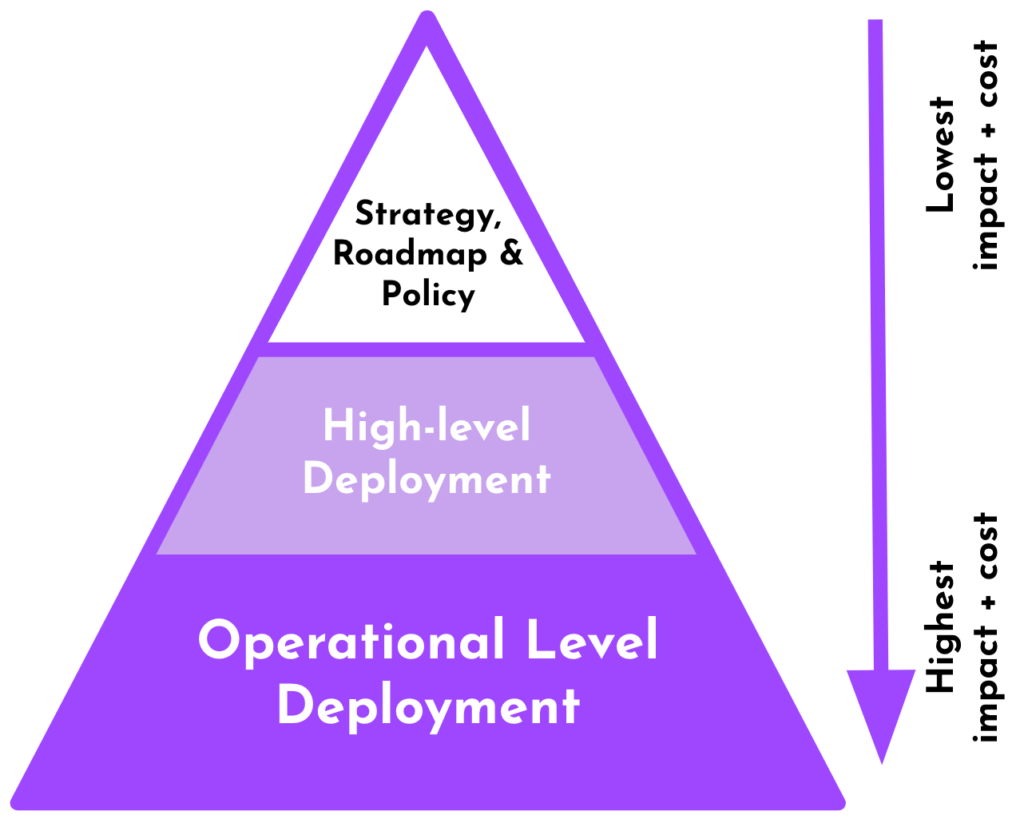

The impact of generative AI will increase exponentially with depth of integration, so firms should aim for full ‘Operational Level Deployment’. For your initial AI projects, though, you will likely stop at ‘High-level Deployment’.

Operational Level Deployment gives any team member the tools required to build their own automation and Assistants, whereas with ‘High-level Deployment’ the team responsible for AI will scope use cases, deploy solutions and monitor performance.

An AI strategy in business outlines the systematic plan to implement artificial intelligence to boost operational capabilities, competitive edge, and market adaptiveness. This strategy details the integration of AI technologies to optimise processes, enhance customer interactions, and innovate product offerings, ensuring these elements align with the broader corporate goals and values.

Essential to any successful AI strategy are a clear vision and well-defined objectives that align with the organisation’s overall mission. This involves integrating ethical AI practices, thorough technology assessments to identify and address infrastructural gaps, and robust measurement frameworks to track the effectiveness of AI initiatives against set performance indicators.

AI strategies are designed to support business goals such as improving operational efficiency, strengthening customer engagement, and fostering innovation. By aligning AI objectives with these goals, businesses can ensure that their AI initiatives not only add value but also propel the company towards long-term success.

Deploying AI involves critical ethical considerations to ensure that the technology is used responsibly. These include ensuring transparency in AI processes, maintaining accountability for AI-driven decisions, safeguarding privacy and data protection, and actively managing biases to promote fairness and non-discrimination in AI applications.

Comprehensive AI training for employees encompasses basic understanding of AI principles, ethical considerations, and hands-on interaction with AI tools relevant to their roles. For those in more technical or decision-making positions, advanced training in data analysis, system maintenance, and troubleshooting is crucial to maximise the benefits of AI integration.

A technological assessment in an AI strategy identifies existing capabilities and gaps, providing a roadmap for infrastructure enhancements necessary for effective AI implementation. This assessment helps tailor AI solutions to the organisation’s specific needs, ensuring optimal integration of AI technologies into existing systems and maximising the strategic benefits of AI investments.

Your AI roadmap should include a phased implementation plan that outlines capability building, knowledge enhancement, and structured deployment of AI technologies. It should detail the establishment of an AI leadership team, talent acquisition strategies, data infrastructure assessments, and initial AI training programmes. Additionally, the roadmap should prioritise AI projects that align with strategic goals, assess technical feasibility, and evaluate risk, ensuring ongoing assessment and adaptation based on AI’s impact on operations and customer satisfaction.

An effective AI policy should govern the development, deployment, and management of AI technologies across your organisation. It needs to outline the purpose of AI use, its scope within the company, and the responsibilities of various stakeholders. The policy should include standards for ethical AI use, ensuring transparency, accountability, fairness, privacy, and security. Additionally, it must address data handling practices, external partnerships, and compliance with regulatory requirements, while setting up mechanisms for monitoring, incident management, and disciplinary actions in case of policy violations.